
Software

NVIDIA NIM Microservices

Price on request

MOQ: not specified | Lead time: on request | Currency: INR

Highlights at a glance

- ✓ Category: Software

- ✓ Made in Bangalore, KA, India

- ✓ Certified: ISO 26262, IEC 61508

About this item

- Accelerate AI Deployment With NVIDIA NIM

- Enterprise Generative AI That Does More for Less

- Easy, enterprise-grade microservices built for high-performance AI

- Deploy enterprise-grade microservices that are continuously managed by NVIDIA

- Improve total cost of ownership (TCO) with low-latency, high-throughput AI inference that scales with the cloud

- Deploy anywhere with prebuilt, cloud-native microservices

Description

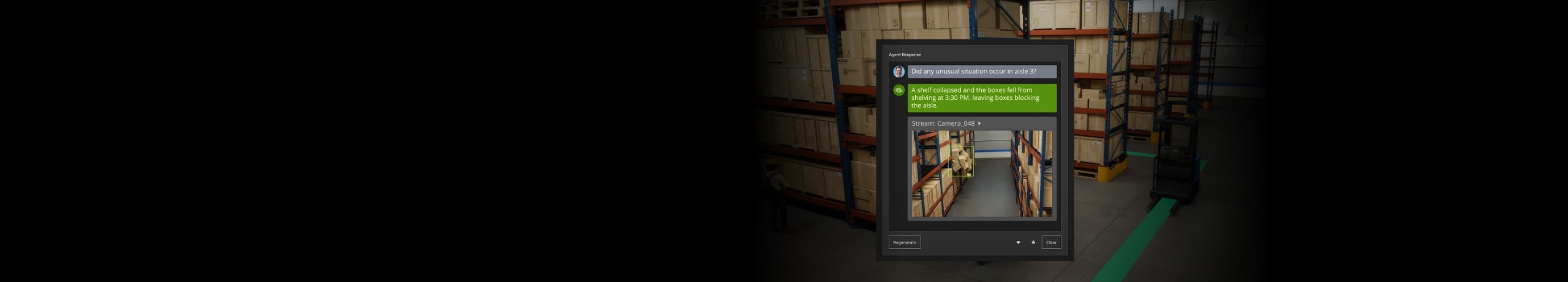

Designed for rapid, reliable deployment of accelerated generative AI inference anywhere. NVIDIA NIM combines the ease of use and operational simplicity of managed APIs with the flexibility and security of self-hosting models on your preferred infrastructure. NIM microservices come with everything AI teams need—the latest AI foundation models, optimized inference engines, industry-standard APIs, and runtime dependencies—prepackaged in enterprise-grade software containers ready to deploy and scale anywhere.

Overview

NVIDIA NIM™ provides prebuilt, optimized inference microservices for rapidly deploying the latest AI models on any NVIDIA-accelerated infrastructure—cloud, data center, workstation, and edge.

Sovereign AI Agents Think Local, Act Global With NVIDIA AI Factories

Validated design for AI factories pairs accelerated infrastructure with software, including new NVIDIA NIM™ capabilities and an expanded suite of NVIDIA blueprints.

Free Development Access to NIM

Get unlimited prototyping access through hosted NIM APIs accelerated by DGX Cloud, or download and self-host NIM microservices for research and development as part of the NVIDIA Developer Program.
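For the self-hosted route, a deployment script usually waits for the container to report readiness before sending traffic. The sketch below polls a readiness probe with a fixed delay between attempts. The URL `http://localhost:8000/v1/health/ready` and port 8000 are assumptions based on common defaults for LLM NIM containers; check the documentation of the specific microservice you download. `http_ready` and `wait_until_ready` are hypothetical helper names, not part of any NVIDIA SDK.

```python
import time
import urllib.request
from typing import Callable

# ASSUMPTION: an LLM NIM container published on localhost:8000 with this
# readiness path. Verify the path and port for your specific microservice.
READY_URL = "http://localhost:8000/v1/health/ready"


def http_ready(url: str = READY_URL, timeout_s: float = 5.0) -> bool:
    """Return True if the endpoint answers HTTP 200, False on any error."""
    try:
        with urllib.request.urlopen(url, timeout=timeout_s) as resp:
            return resp.status == 200
    except OSError:
        # URLError, HTTPError (e.g. 503 while models load) and socket
        # timeouts all derive from OSError, so one clause covers them.
        return False


def wait_until_ready(
    probe: Callable[[], bool],
    attempts: int = 60,
    delay_s: float = 5.0,
    sleep: Callable[[float], None] = time.sleep,
) -> bool:
    """Call `probe` up to `attempts` times, sleeping between failed tries."""
    for attempt in range(attempts):
        if probe():
            return True
        # Do not sleep after the final failed attempt.
        if attempt < attempts - 1:
            sleep(delay_s)
    return False
```

A typical call is `wait_until_ready(http_ready)`. Passing the probe and `sleep` as parameters keeps the retry logic independent of the network, so it can be tested without a running container.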


Benefits

Enterprise Generative AI That Does More for Less

Easy, enterprise-grade microservices built for high-performance AI—designed to work seamlessly and scale affordably. Experience the fastest time to value for AI agents and other enterprise generative AI applications powered by the latest AI models for reasoning, simulation, speech, and more.

Ease of Use

Accelerate innovation and time to market with prebuilt, optimized microservices for the latest AI models. With standard APIs, models can be deployed in five minutes and easily integrated into applications.
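To illustrate integration through a standard API, the sketch below sends an OpenAI-style chat completion request using only the Python standard library. It assumes an LLM NIM that exposes an OpenAI-compatible `/chat/completions` route under a base URL such as `http://localhost:8000/v1` (self-hosted) or `https://integrate.api.nvidia.com/v1` (hosted, with an API key). The model name `meta/llama3-8b-instruct` is also an illustrative assumption, and `build_chat_request` and `chat` are hypothetical helper names.

```python
import json
import urllib.request
from typing import Optional

# ASSUMPTION: the target NIM serves an OpenAI-compatible chat completions
# route at <base_url>/chat/completions.


def build_chat_request(
    base_url: str,
    model: str,
    prompt: str,
    api_key: Optional[str] = None,
    max_tokens: int = 256,
) -> urllib.request.Request:
    """Build (but do not send) a POST request for a single-turn chat."""
    body = {
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
        "max_tokens": max_tokens,
    }
    headers = {"Content-Type": "application/json"}
    if api_key:
        # Hosted endpoints expect a bearer token; local containers may not.
        headers["Authorization"] = f"Bearer {api_key}"
    return urllib.request.Request(
        url=base_url.rstrip("/") + "/chat/completions",
        data=json.dumps(body).encode("utf-8"),
        headers=headers,
        method="POST",
    )


def chat(base_url: str, model: str, prompt: str,
         api_key: Optional[str] = None, timeout_s: float = 60.0) -> str:
    """Send the request and return the first choice's message text."""
    req = build_chat_request(base_url, model, prompt, api_key)
    with urllib.request.urlopen(req, timeout=timeout_s) as resp:
        payload = json.load(resp)
    return payload["choices"][0]["message"]["content"]
```

For example, `chat("http://localhost:8000/v1", "meta/llama3-8b-instruct", "Hello")` would target a local container. Keeping request construction separate from the network call makes the payload easy to inspect or test without a running endpoint.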

Enterprise Grade

Key attributes

Certificates

ISO 26262

IEC 61508
